SUMMARY: If your Intel CPU supports Quick Sync, and your motherboard lets you boot with internal graphics running alongside your dedicated GPU, your H.264 and H.265 encoding times should drop dramatically.

Anyone who uses Creative Cloud software either has a love/hate relationship with Adobe or doesn’t know any better. Premiere keeps bloating like a bad all-you-can-eat buffet, even as it leaves unforgivable major bugs unfixed for months and sometimes years. Adobe’s Q1 2018 profits are rocketing out of orbit, just as they’ll be jacking up your subscription cost this month. Every year when the NAB Show rolls in, Adobe rushes to market a new lineup of features, mostly fashionable additions aimed at fringe industries and deployments like VR. They also boast each time about bug fixes, which amounts to Adobe finally admitting publicly that the program’s basic features are a mess. Some highlights in their latest April 2018 fix list include “Crash when playing some files at 1/2 resolution,” “All frames are dropped on playback of an HEVC clip when playback is set to less than Full resolution,” etc. That’s not to mention Lumetri being the #1 reason for failed exports. And then, at this link, they admit to some current major, unfixed bugs, and that’s just from day one, before hundreds more accumulate ahead of the next update. All this adds new meaning to the term “upgrade,” which really amounts to “degradation” in Adobeland.

But amid that usual madness, Adobe introduced two stunning features this week. One of them has been reported endlessly, like a reprinted press release: a new comparison view in the Program Monitor, combined with “Sensei”-driven automated color matching between shots. It’s really incredible for color grading, and it’s long overdue. But what absolutely no one has written about is a short blurb way down their list, which is actually the most important productivity boost they’ve added in years.

Intel Hardware Acceleration of H.264 & HEVC Encoding

When you click this link, you’ll see a table on Intel’s website listing every CPU that supports a technology called Quick Sync. If your Windows or Mac computer has one of those CPUs installed, the next thing to check is whether your motherboard lets you enable Intel’s internal graphics and a dedicated GPU (such as GeForce or Radeon) at the same time. By default, the BIOS of most motherboards (a configuration screen you can access before booting into the OS) sets internal graphics to “Auto,” which actually means that if you have a dedicated GPU installed, Intel UHD/HD Graphics gets disabled. Some (but not all) motherboards allow you to force-enable internal graphics, and then it’s possible — though not guaranteed — you can boot into the OS with both internal and dedicated graphics acceleration active at the same time.
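As a rough sketch, the prerequisites above can be written as a small Python decision function. The `SystemConfig` fields and the BIOS labels “Auto”, “Enabled” and “Disabled” are hypothetical; real BIOS menus name and place this setting differently. A True result only means the prerequisites look right, not that the OS will actually boot with both GPUs active.

```python
from dataclasses import dataclass


# Hypothetical model of the checklist above. Field names and the BIOS
# setting labels are illustrative assumptions; vendors label them differently.
@dataclass
class SystemConfig:
    cpu_supports_quick_sync: bool  # per Intel's CPU table
    has_discrete_gpu: bool         # e.g. a GeForce or Radeon card
    igpu_bios_setting: str         # "Auto", "Enabled", or "Disabled"


def igpu_active(cfg: SystemConfig) -> bool:
    """Return True if the BIOS setting leaves Intel internal graphics on."""
    if cfg.igpu_bios_setting == "Disabled":
        return False
    if cfg.igpu_bios_setting == "Auto":
        # "Auto" typically switches the iGPU off once a discrete GPU is present.
        return not cfg.has_discrete_gpu
    # "Enabled": force-enabled alongside any discrete GPU.
    return True


def quick_sync_prerequisites_met(cfg: SystemConfig) -> bool:
    """Necessary, not sufficient: the OS must still boot with both GPUs active."""
    return cfg.cpu_supports_quick_sync and igpu_active(cfg)


if __name__ == "__main__":
    typical = SystemConfig(True, True, "Auto")   # default BIOS, dedicated card
    forced = SystemConfig(True, True, "Enabled")  # iGPU force-enabled
    print(quick_sync_prerequisites_met(typical))  # False
    print(quick_sync_prerequisites_met(forced))   # True
```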

Of course, Adobe explains none of this anywhere, overlooking the fact that their new feature won’t be available to the vast majority of users without some “hacking” like that described here. (Probably, true to lazy form, Adobe just doesn’t want to deal with anything remotely related to hardware, as if you could separate hardware from software development.) The same goes for the crowded new majority of Adobe-lobbied “filmmaking” (i.e., wedding videography) bloggers writing about this release.

This screen capture shows how it looks when a CPU’s internal graphics with Quick Sync actually works. In most cases, though, if you have a dedicated GPU like most people, you’ll get “Software Only” in the new Encoding Settings/Performance field, with “Hardware Accelerated” grayed out.

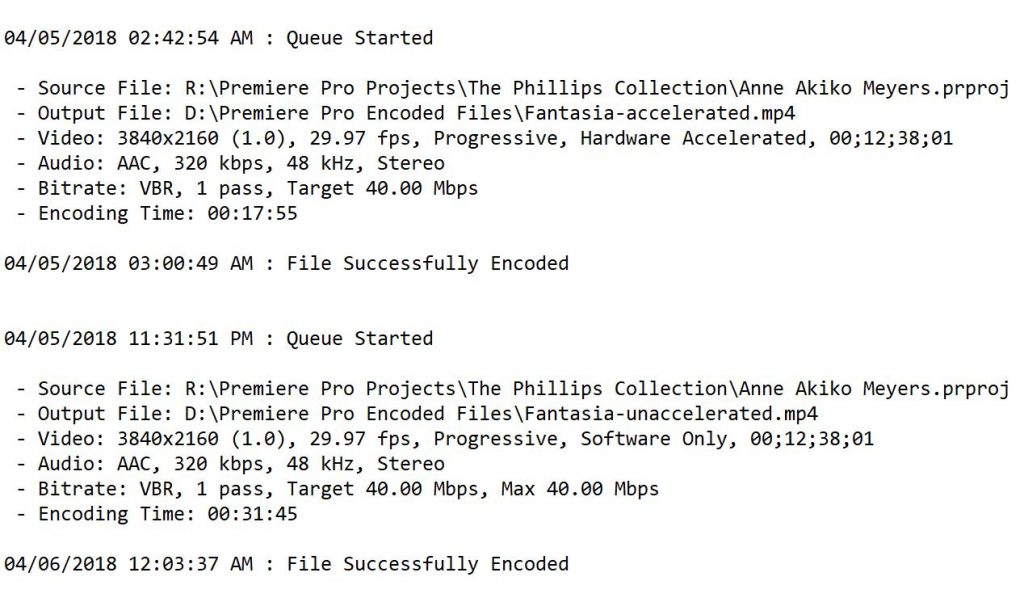

But let’s say you do get the killer combo of a supported CPU and simultaneous internal and external graphics through a full OS boot. Great! Let’s see how much better it performs, both for a direct export from within Premiere and for the same export run through Adobe Media Encoder.

Here’s my case study. The above video represents a fairly intensive amount of churning at export. There are three camera angles using very different codecs, from a Sony a7S II’s UHD XAVC-S to a Panasonic GH4’s UHD AVC to a Blackmagic Pocket Cinema Camera’s HD 10-bit ProRes 4:2:2. All of them are graded with Lumetri in combination with FilmConvert, outputting to UHD. So, this is reasonably heavy lifting.

The system I built has a Core i7-6700K CPU (which supports Quick Sync per the reference table, via Intel HD Graphics 530) with 32GB of DRAM on a Gigabyte Z170X motherboard that lets me force-enable internal graphics even while running an Nvidia GeForce GTX 1060 6GB graphics card. In effect (and this is ironic, given the current retail crisis in the GPU industry), it’s as if the internal graphics were only mining the “cryptocurrency” of H.264 acceleration, without even being connected to or driving a monitor.

From the above results, you can see that the hardware-accelerated export of the exact same content ran about twice as fast. Let’s look at another test.

With the same multi-camera configuration and effects as the last test, the difference in export speed is slightly smaller here, which the much shorter running time explains. Note that this pair of exports drew camera sources from a regular hard drive rather than the SSD used in the prior example, just to add variety.

Clearly, these examples portend a huge acceleration of our exports to the most common codec in the universe. Seriously, it’s the rare exception that we export to anything but H.264. One theoretical boogeyman that has always hung over hardware-accelerated exports is that they allegedly do a barely perceptibly worse job (e.g., digital noise artifacts) than the Mercury Playback Engine alone; meanwhile, you’ll occasionally find a stray report that software rendering actually looks worse. I score it all as needless pixel-peeping compared to the extraordinary benefits of faster exports. So far, I’ve never seen any visual flaw attributable to a hardware-accelerated render.

Encoding vs. Decoding: there’s a big difference (of course)

But hold on: haven’t we seen Intel acceleration before? Take a look at the section of Preferences below. Look familiar?

The checkbox pointed to in red has been there for a few years. But the key distinction is that it handles decoding, whereas this new feature handles encoding. It’s a great example of Adobe botching another feature for years and never explaining it well. Besides always leaving the h in H.264 lowercase, Adobe never addressed whether this feature requires Intel internal graphics to be enabled simultaneously with a discrete GPU, and they let the checkbox be enabled no matter what (rather than grayed out, as for encoding). What’s more, it’s been widely reported, apparently by a majority of users, that the feature offers no recognizable benefit, and it’s often sleuthed out as the culprit behind failed or crashed exports. All told, we shouldn’t confuse these features, and the only one that makes a real difference is the new Intel hardware-accelerated encoding added this week. For once, Adobe really did something (and fingers crossed that it holds up).

If you have any questions about how to configure your computer for this new acceleration feature, let me know in the comments with a system description, and I’ll do my best to help.

I’m glad they’re finally getting around to supporting this, but you’re right, they didn’t do it in a great way. They should have made this one of the headline features of the update, provided better documentation on how to make it work, and added Windows support for HEVC. It makes no sense. They should also support NVENC, not just Quick Sync, for those who use Xeon processors that don’t have integrated graphics.

Found this version extremely unstable. Just a warning to anyone editing a current project: avoid at all costs. If you already updated in the Creative Cloud app, you can roll back to a previous version. I was stable until the upgrade this weekend. Now I crash every 10 minutes or less…

NVENC should be added into the mix. I’m finding HEVC conversion 2-4 times faster than realtime, whereas Premiere takes about 10x longer than realtime. Granted, Premiere has to process the colour correction and audio, but still, it doesn’t need to take that long. My method now is to output a high-bitrate H.264 encode from Premiere, then run that file through FFmpeg with NVENC enabled to convert it to H.265. Much faster overall, but it would be great to cut out the middleman.
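For reference, here is a minimal Python sketch that builds the second-step FFmpeg command for this kind of workflow. It assumes an FFmpeg build with NVENC support (the `hevc_nvenc` encoder) and a compatible Nvidia driver; the helper name, the file names and the 40M bitrate are placeholders, not settings taken from the comment.

```python
import shlex
from typing import List


# Hypothetical helper: re-encode a high-bitrate H.264 master to HEVC on an
# Nvidia GPU. Assumes an FFmpeg build compiled with NVENC support.
def build_nvenc_hevc_cmd(src: str, dst: str, video_bitrate: str = "40M") -> List[str]:
    return [
        "ffmpeg",
        "-i", src,              # high-bitrate H.264 export from Premiere
        "-c:v", "hevc_nvenc",   # FFmpeg's NVENC-based H.265/HEVC encoder
        "-b:v", video_bitrate,  # target video bitrate (placeholder value)
        "-c:a", "copy",         # pass the audio stream through untouched
        dst,
    ]


if __name__ == "__main__":
    cmd = build_nvenc_hevc_cmd("master_h264.mp4", "final_hevc.mp4")
    # Print the shell-quoted command for inspection before running it.
    print(shlex.join(cmd))
```

Printing the command first lets you check it; once it looks right, it can be run with `subprocess.run(cmd, check=True)`.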

To get NVENC in Premiere, you can try the Voukoder export plugin (it’s based on FFmpeg).

Also, here is potentially useful info about speeding up rendering:

https://forums.adobe.com/message/11103540

Works like a charm on my editing laptop with an Intel i7-7820HK (Intel HD 630) and a GTX 1070 with Nvidia Optimus.

The speed boost in exporting is amazing!

Exporting a 13-second Sony XAVC-S 4K clip to H.264 High Quality 2160p 4K:

- Encoder settings: Software = 41 sec
- Encoder settings: Hardware Accelerated = 13 sec

From 41 to 13 sec! Nice!

This sped up my 4K exporting time sooooo much!
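To put numbers like these in perspective, here is a small Python sketch that computes the speedup, the share of export time saved, and the realtime factor (seconds of footage encoded per second of wall-clock time). The figures plugged in are the 13-second clip, 41-second software export and 13-second hardware export reported in the comment; the function names are illustrative.

```python
def speedup(software_s: float, hardware_s: float) -> float:
    """How many times faster the hardware-accelerated export finished."""
    return software_s / hardware_s


def time_saved_pct(software_s: float, hardware_s: float) -> float:
    """Percentage of the software export time saved by hardware acceleration."""
    return 100.0 * (software_s - hardware_s) / software_s


def realtime_factor(clip_s: float, export_s: float) -> float:
    """Seconds of footage encoded per wall-clock second (>1 is faster than realtime)."""
    return clip_s / export_s


if __name__ == "__main__":
    clip, sw, hw = 13.0, 41.0, 13.0  # figures from the comment above
    print(f"speedup: {speedup(sw, hw):.2f}x")                    # speedup: 3.15x
    print(f"time saved: {time_saved_pct(sw, hw):.1f}%")          # time saved: 68.3%
    print(f"software: {realtime_factor(clip, sw):.2f}x realtime")  # software: 0.32x realtime
    print(f"hardware: {realtime_factor(clip, hw):.2f}x realtime")  # hardware: 1.00x realtime
```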

Does this work when passed to the AME queue? It’s confusing, since it also says Mercury GPU acceleration and the renderer.

Hi, I did a test exporting two videos in H.264 1080p 24fps, and the results greatly favoured software encoding over hardware encoding, since the latter produced terrible digital noise. Am I missing something?

Thanks for the article. I have a new Alienware Aurora R7 with a Core i7-8700K Coffee Lake processor and Intel Z370 chipset. Do you think I can enable Quick Sync H.264 hardware acceleration in my BIOS? Where in the BIOS do I find that?

When I export, the GPU sits at 0%. So I’m basically not getting any assistance from my expensive video card. How do I get it to kick in when I export H.265 video in Adobe Premiere?

Keep in mind, this is not about GPU acceleration from your installed card; it’s about an acceleration technology called Quick Sync built into certain Intel CPUs.

Well, I’m in over my head now, because I couldn’t even find “Encode Setting” under Export, though I set all the other stuff, like Preferences and the console, for hardware acceleration. My config is an i5-7400 on an MSI H110 board with 8GB RAM but no dedicated GPU. I enabled the IGD too. All my other software uses Quick Sync without a dGPU. Some say I need a dGPU. Is that true, or is there another reason?

Thanks for that reply. I just loaded the BIOS and don’t see any mention of internal graphics of any sort, so perhaps my mobo can’t do it. I’ll check further just to be sure, but thanks.

Hey, how can I enable hardware acceleration? My specs: i7-7700HQ + GTX 1070. I only get Software Only and can’t choose hardware acceleration. I’ve checked the CPU list linked above. Is that mobile processor not supported for hardware acceleration?

Thank You!

You guys do realize that Quick Sync isn’t as good quality as CPU encoding? This has been done to death, but generally speaking it’s better to use a lighter codec than Quick Sync, as you’ll get better results and just as much of a performance increase. You guys are giving the wrong impression here.

My buddy’s main editing rig is still an i7-2600 system, and my previous CPU was an i7-3770K (now I use a Xeon E5-1650 v2, so no Quick Sync). I also have a mini-ITX system I built using a laptop CPU, the i7-3610QM. I wonder if Adobe itself has a supported Quick Sync generation/CPU list, as I’ve heard the iGPUs on 2nd- and 3rd-gen CPUs weren’t exactly the best and had issues (which could mean only partial Quick Sync support, who knows). I may try seeing what renders faster, my 6-core Ivy Bridge Xeon or my laptop i7-3610QM, lol. Do you know if Adobe has an official support list for this feature?

Hi Paul,

Thanks for your article, I’ve found it very useful and well written.

I got very curious after reading it, because I’d noticed that option in the export window and was wondering what that “Hardware Acceleration” referred to. I never ran tests to see the difference, because I’ve been very busy these last few months.

Today I had some spare time and I decided to give a try.

My mobile (testing) machine is a 1st-gen Microsoft Surface Book with an Intel Core i5-6300U, 8GB RAM, and a dedicated GPU (comparable to a GeForce 940MX) with 1GB of GDDR5.

It’s my favorite mobile PC for editing and photography work. It isn’t the latest-spec machine on the market, but it perfectly suits my needs.

Of course, it has an Intel HD 520 integrated GPU, managed by Optimus (which switches from the iGPU to the dedicated GPU when needed).

Checking the link in the article confirmed that my CPU has Intel QuickSync support.

The laptop’s main screen is mostly driven by the iGPU, since the secondary (Nvidia) GPU is used only when an application needs more power (set up via the Nvidia Control Panel).

So I assumed my motherboard and BIOS let me use both the iGPU and the dedicated GPU, because the laptop screen is always driven by the Intel GPU.

In the BIOS there is, of course, no option for selecting a preferred GPU.

In a simple test project with a 16-second H.264 8-bit 1080p30 clip, I got interesting results.

I applied a 3D LUT (16x16x16) to the clip to test some color grading.

I used AME to export the clip.

With software encoding, the total encoding time was 31 seconds, and Task Manager showed the Intel GPU at about 7% average usage during encoding.

Then I tried it with hardware acceleration enabled.

Surprisingly (or not), the total encoding time was cut in half: only 16 seconds to encode the same project, and integrated GPU usage jumped to a 68% average, confirming that Intel Quick Sync uses the iGPU to encode.

It would be nice to try a longer, more complex project to see how much I could gain with this feature.

Thanks again for your article!

If you need screenshots or more info, feel free to ask.

Have a nice day

When I go to the same Preferences screen, my window doesn’t show the H.264 decoding option. I’m currently using a GTX 1070 and an i7-8700K, both of which are on the compatibility list. Do you have any idea why?

I am currently updated to version 12.1.2 build 69.

@focuspulling

Are you referring to using iGPU Multi-Monitor settings in the BIOS?

And if it’s enabled and the other system requirements are met, do you know whether this can be done with the CS5.5 version?

Just implemented the BIOS change (i7-7700K and Gigabyte Z270X-Ultra Gaming), and the Performance tab in Task Manager did indeed list a second GPU.

I got an error only when trying to export as VBR, 2 Pass, and it would fall back to Software Only. Otherwise, I saw a speed boost only when rendering GPU-intensive media, though sometimes the CPU just has to crank it out on its own.

Reading elsewhere in the comments, there seems to be a bit of unanswerable speculation about output quality. My quality concern is that only a single VBR pass is allowed, but maybe the second GPU is doing the second pass? I’m totally out of my depth on that one.

I ended up switching back to the single GPU setup – I’d rather not risk sacrificing quality for speed until I can test it myself.

I ran several exports and posted comparison and basic analysis on an Adobe Spark page, which you can see here: https://spark.adobe.com/page/wuYK0eakRzJUT/

I’d appreciate any thoughts or feedback you guys have.

It’s unclear what encoder settings were used and what final bitrate each file ended up with. Without this info, the test isn’t really helpful.

I can’t get more than a 40 Mbps bitrate in the actual file after exporting with hardware acceleration. No matter how high I set the target bitrate, I still get the same result. I’m working with BMPCC4K footage with bitrates usually around 800 Mbps. After exporting, the video looks like garbage… Is software rendering my only choice?
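To check what an export actually delivered, the average bitrate can be estimated from file size and running time, and vice versa. This Python sketch assumes decimal units (1 MB = 10^6 bytes, 1 Mbps = 10^6 bits/s); the result covers video, audio and container overhead together, so it slightly overstates the video bitrate alone. The function names are illustrative.

```python
def average_bitrate_mbps(file_size_bytes: int, duration_s: float) -> float:
    """Average overall bitrate in Mbps (video + audio + container overhead)."""
    return file_size_bytes * 8 / duration_s / 1_000_000


def expected_size_mb(bitrate_mbps: float, duration_s: float) -> float:
    """Approximate file size in decimal megabytes for a given average bitrate."""
    # Mbps * seconds = megabits; divide by 8 to get megabytes.
    return bitrate_mbps * duration_s / 8


if __name__ == "__main__":
    one_minute = 60.0
    print(expected_size_mb(40, one_minute))   # 300.0 (the reported 40 Mbps cap)
    print(expected_size_mb(800, one_minute))  # 6000.0 (BMPCC4K source rate)
    print(average_bitrate_mbps(300_000_000, one_minute))  # 40.0
```

At a one-minute running time, the 40 Mbps cap works out to about 300 MB versus roughly 6,000 MB at the 800 Mbps source rate, a 20x reduction. Note that file browsers often report sizes in binary units (MiB), which shifts the numbers slightly.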

So I have an i7-6700K, and after downloading and installing the Intel graphics driver and setting Internal Graphics to “enable” in the BIOS, none of Premiere, After Effects, or DaVinci Resolve would launch. Premiere gave me an error about not having a video module, Resolve wouldn’t even show a launch screen, and After Effects crashed on launch. Thankfully, I was able to roll back to an earlier version of Windows 10, and that reset everything. But it’s frustrating having a machine that Adobe won’t use to its full advantage…

Wait! Not possible on a Ryzen 1700? 😮

Great info! Any idea what the keyboard command is on Mojave to get to the “Open Firmware” screen to adjust the BIOS settings? I can’t access it and can’t find anything online about it. Thanks!

GTX 1660 Ti here with a 9700K, and hardware acceleration is still grayed out. How do you get Intel Quick Sync, enabled via the BIOS, to work in tandem with the GPU?

Please refer to the guidance above to better diagnose exactly what you’re running into. Again, I wrote: “By default, the BIOS of most motherboards (a configuration screen you can access before booting into the OS) sets internal graphics to “Auto,” which actually means that if you have a dedicated GPU installed, Intel UHD/HD Graphics gets disabled. Some (but not all) motherboards allow you to force-enable internal graphics, and then it’s possible — though not guaranteed — you can boot into the OS with both internal and dedicated graphics acceleration active at the same time.”

Install the “Voukoder” export plugin and forget about Intel’s hardware acceleration; you already have a much better one. Or you may try the “Cinergy Daniel” variant.

Looks interesting, but I think you’re misunderstanding the parallel nature of Quick Sync, which leverages the second GPU built into Intel processors to absorb the encoding task while the more powerful Nvidia or AMD GPU does the heavy lifting on effects as usual. Voukoder and others divert GPU energy to encoding.

Nvidia GPUs have a dedicated encoder/decoder block inside the chip that isn’t used for any other task. Run GPU-Z for monitoring; it has a separate field, Video Engine. Check for yourself: it’s never loaded until you play video or run hardware encoding. So no worries about a drop in CUDA performance. Also, the encoder in Pascal/Turing GPUs is definitely faster than any Intel variant to date.

Thanks for adding to this diagnosis; it’s a really healthy inquiry into an area where (as I criticize above) Adobe does its typically arrogant and silent job of pretending it’s not their business to explain.

Let’s focus on the fact that Quick Sync is an Intel CPU-integrated invention focused only on H.264 and H.265 (the first of which is, let’s be real, the codec of choice for 99% of exports). Benchmarks have shown that, given that narrow tailoring, it outperforms the fastest GPUs, even though the latest GPUs are more powerful overall across the full palette of effects. And again: we are talking about something that doesn’t HURT encoding speeds, but only helps. Not having it is worse than having it available for use. But sure, you can figure out other ways to speed up your encoding.